The US Navy first took a ballistic missile to sea in 1947, when it fired a V-2 from the deck of the carrier Midway. But interest dried up quickly, as the US focused on aircraft as the main nuclear delivery platforms. To back these up, the Navy planned to use cruise missiles like Regulus instead of ballistic weapons. This began to change in the early 50s. The invention of the hydrogen bomb gave missile designers a warhead that could compensate for the inaccuracy of the weapon, and improvements in inertial navigation systems meant that missile accuracy improved significantly. The Navy was initially divided on the idea, with many fearful of the impact of such an expensive system on shipbuilding budgets, and little work was done until Admiral Arleigh Burke took office as Chief of Naval Operations in 1955.

A V-2 is fired from Midway

Burke was a strong proponent of the sea-based ballistic missile, but despite his swift actions on taking office, he was faced with a problem. Ballistic missiles had just been given the highest national priority, thanks to the work of the Killian Committee, which had laid the foundations of what became Mutually Assured Destruction. To limit competition in the new field, only four missile programs were to be authorized, and the limit had already been reached, with the Air Force's Atlas and Titan ICBMs and Thor Intermediate-Range Ballistic Missile (IRBM), and the Army's Jupiter IRBM, under development by Wernher von Braun's team in Huntsville. If the Navy was going to get missiles to sea any time soon, it would need a partner. The Air Force, unhappy with the changes required to make Thor adaptable to maritime use, turned them down, while the Army, seeking to break the Air Force monopoly on ballistic missiles, agreed to the partnership in November 1955.

Arleigh Burke

To spearhead the effort and sidestep a jurisdictional battle between the Bureau of Aeronautics and the Bureau of Ordnance, Burke created a separate Special Projects Office to manage what was now known as the Fleet Ballistic Missile (FBM) program. To head it, he chose William Raborn, who proved an inspired choice. Burke gave him carte blanche to ransack the rest of the Navy for funds and personnel, and Raborn put them on a war footing, coming into the office himself on most Saturdays. Initially, their job was to make Jupiter into an effective weapon, with surface ships taking it to sea in 1960, and submarines in 1965. This proved difficult, as the first generation of ballistic missiles was not well suited for naval use. The first problem was sheer size. To carry the necessary warhead, the missile would need to weigh somewhere over 100,000 lb, and the initial design was 95' long. The weight was manageable, but there was no way to fit a 95' missile into a vertical launcher on a submarine. The Navy wanted a missile no longer than 50', but eventually a compromise was reached, with the final missile being 58' long and 105" in diameter. The Jupiters would be stored in the sail, much as the Soviets did in their first SSBNs, and the submarine would have to surface to launch them.

A Jupiter

An even bigger problem was the choice of liquid oxygen and kerosene to propel Jupiter. The Navy was deeply opposed to liquid fuels aboard its ships due to the risk of leaks, and the use of cryogenic liquid oxygen meant that they would have to fuel the missiles shortly before launch, adding significant complications to operations. The Navy would have preferred to use solid-fuel rockets, and managed to convince the DoD to allow them to build a solid-fueled missile to carry the same 3,000 lb W38 warhead planned for Jupiter to the same 1,500 mile range. They called it Jupiter-S, and it would be slightly shorter than Jupiter, but heavier and even larger in diameter. While a step in the right direction, it was still too big and too heavy, and the SPO began looking at a 30,000 lb missile carrying a much lighter warhead.

William Raborn

This missile became the Navy's main focus from mid-1956, as the result of discussions held during Project Nobska, a conference held to discuss future ASW technology, particularly that required to counter the nuclear submarines that were just entering service. During discussions, Edward Teller, father of the H-bomb and deputy director of the University of California Radiation Laboratory, the nation's second atomic-bomb design facility, proposed a nuclear torpedo. When asked about the size of the warhead, he began to talk about the trends in warhead yield per unit weight, claiming that his group could produce a 1 MT warhead weighing only 400 lbs by the time the hypothetical missile could be ready. J. Carson Mark, Teller's equivalent from Los Alamos, disagreed with this claim, stating that he thought only 500 kT was achievable in the required timeframe. The Navy realized that no matter who was right, this was plenty adequate, and quickly began to focus development on the new missile, dubbed Polaris by Admiral Raborn. That December, he briefed Secretary of Defense Charles Wilson, showing a half-billion dollar saving for the new missile, because it was smaller and would require fewer ships to deploy a given amount of firepower. This persuaded Wilson to give his blessing, and the Navy pulled out of the Jupiter program in favor of Polaris.

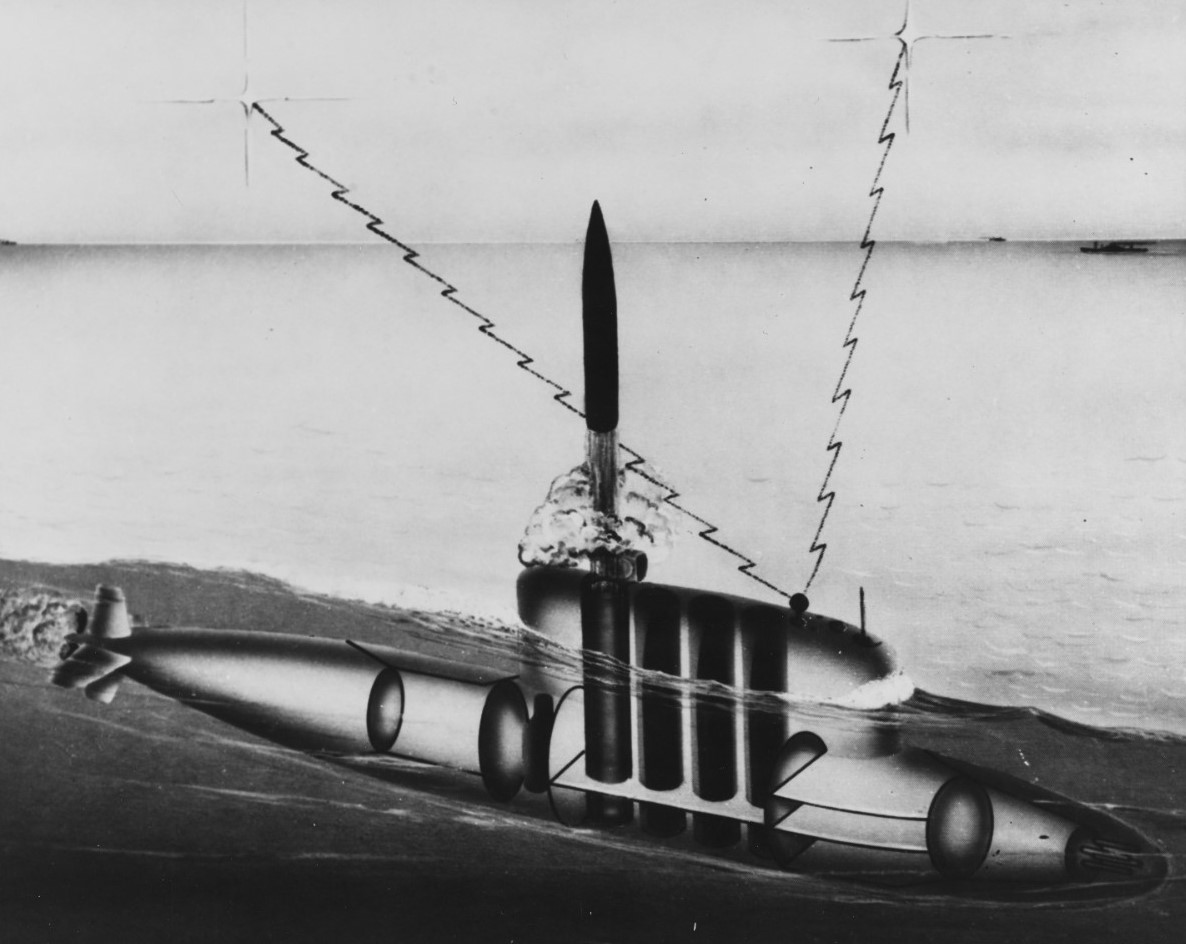

A Jupiter-equipped submarine launching missiles from the surface

The new missile marked a major change in the Navy's place in nuclear strategy. Previously, it had concentrated on hitting tactical targets, either to counter Soviet naval forces or support the land defense of Western Europe. Strategic nuclear targets were in the hands of the Air Force's Strategic Air Command (SAC), who built a force based around the idea of destroying Soviet warmaking capability. By the late 50s, this looked increasingly dubious due to new Soviet missiles, and Burke proposed an alternative. Polaris, installed in nuclear submarines, offered the ability to build a force that could survive a Soviet first strike and retaliate against Soviet cities instead of tactical targets. This reduced the demand for accuracy, and played to the strengths of the nuclear submarine. Aiding his push were new studies which suggested that the Air Force had greatly overestimated the number of bombs required to destroy the Soviet Union, and that a much smaller force was adequate.1

Edward Teller

Initially, the plan was to have an interim missile in service by 1963, with full capability in 1965, but the events of 1957 saw the project accelerated substantially, particularly in the wake of the first Soviet ICBM test that August, followed by the launch of Sputnik in October. By the end of the year, Raborn was able to promise that the first Polaris SSBN would be operational by the end of 1960, only four years after the program had formally started. While this seems incredibly ambitious, particularly given the pace of weapons procurement today, Raborn and the SPO pulled it off. We'll take a look at the systems they produced next time.

1 In fairness to SAC, it's very possible that they knew this, and were padding their estimates to make up for the inevitable friction of war. Saying that sort of thing is always dangerous around budget-cutting politicians, so they didn't.

Comments

So the army got stuck with a compromise design, but it's ok because the Air Force just stole it from them anyway.

Man, the poor army got screwed so bad for decades.

I wouldn't feel too bad for them. They got their revenge by dragging us into Vietnam.

We have a few famous examples of military tech development from WWII to about 1970 taking 1/10 the time of an equivalent modern project.

These missiles, the SR-71, even up until the Apollo project.

So is this an issue like the one pharmaceuticals deal with? A combination of 1. The new product has to be better than the old product, so the standards it has to meet become more and more difficult to achieve. 2. Ever-increasing safety standards.

Or is something else contributing?

Things for the DoD got much worse starting in 1961, when Robert McNamara became SecDef and brought several thousand tons of bureaucracy with him. That's a lot of it. More is just that, yeah, things became more complicated and we were working from a higher baseline.

echo:

Jupiter was apparently more portable than Thor.

It also formed the core of the Saturn I (aka Juno V) with a bunch of Redstone tanks and the first stage of the Juno II.

bean:

But wasn't Gulf of Tonkin a Naval battle?

bean:

But other countries also have the same problem and only the US appointed McNamara.

A big part of the change is the tendency to demand that any new system show a dramatic increase across every possible axis of performance. In the 1950s, the F-86 Sabre had the same weapons and sensor suite as a P-51 or late-model P-40, it was "just" a lot faster. The F-22 had to have stealth and supercruise and a new radar coupled to a new integrated combat suite with new electronic warfare systems and an IRST sensor, etc, etc. The costs for these changes add nonlinearly, and we might have gotten to the same place faster and cheaper if the F-22 was "just" a stealthy F-15, the F-22C added the IRST and some of the electronics, the F-24 added supercruise and the F-26 added the rest of the fancy electronics.

Another issue is that defense contractors are a lot more willing now to deliver systems that meet the letter of the specifications but have obvious operational defects, which means a lot more effort goes into writing exhaustive, bulletproof specifications and then laboriously verifying compliance with all of them.

These two factors reinforce one another, in that demanding massive improvements across the board reduces the number of contractors with the resources to put in a credible bid, and reducing the number of contractors reduces the reputational penalty for being known as the guys who deliver technically-compliant but otherwise crappy and overpriced hardware. Everybody knows that Lockheed is going to try to rip off the government if they can get away with it, but everybody knows that Boeing is almost as bad and now there aren't North American, General Dynamics, Grumman, etc, etc, to turn to.

I once saw someone refer to Teller as the most dangerous Aspie ever, thanks to his ideas like the Backyard Bomb.

Not sure it's fair or even accurate, but it is amusing, in a way.

@John Schilling

What do you think of the potential for NGAD and similar agile manufacturing initiatives to change the procurement picture?

That wasn't what got us into Vietnam. It provided the pretext for the escalation that the Army was already pushing, but there were already US troops in-country when it happened.

Re procurement, I think there's a serious chicken-and-egg problem going on. If we're only going to get one new system, then it can't fail (so we need detailed specs) and we need to cram every advance we want for the next 20 years into it. This started to become a problem in the mid-to-late 50s, and it was probably worse outside the US where budgets were more limited. McNamara definitely accelerated US progress in that direction.

@John

You left out BAE, who are the kings of overpromising and gaming specs.

In addition to John and Bean's comments, I think there's something to be said for operating at a lower level of technology being just easier in some ways. The F-86's initial engine weighed 2500 lbs and produced ~5200 lbf of thrust. The F135 weighs ~3750 lbs and produces about 30,000 lbf without the afterburner. Squeezing 6x the power out of not that much more engine takes a lot of engineering. The F-86 didn't need to have suitable aerodynamics at subsonic, transonic, and supersonic speeds because it could barely go transonic. It didn't need to have wings strong enough to carry 15,000 lbs of fuel and weapons but light enough to still be agile, and so on. Working out all the necessary compromises to do all those things takes a lot of time and effort. And as much as I like to dump on McNamara, the fact that other countries (or even private companies) don't do dramatically better suggests that the problems are legitimately getting harder and it's not just our dumbassery that's getting in the way. Which isn't to say that we don't have plenty of dumbassery.

@bean

It's also organizational. When you're making a new plane every year, the radar team can say "nah, just use the old one for the 56 model, we're working on something for 58". But today if you say "nah, just use the APG-81 with extra modules for NGAD" then what do you do for the next decade?

@redrover

I think the big test is actually B-21, which the USAF took out of the normal procurement system and ran through the rapid fielding office, which was originally set up to do things like buy off the shelf scopes for infantry rifles. We know very little about B-21 development, but if it comes through more on time and budget than other air force projects, it'll be a big step towards proving that a less encumbered procurement process can work.

@john

This seems like a natural outgrowth of a more legalistic, specification driven system. In the old days if you showed up with a plane that met all the specs but couldn't actually fly, the program manager had the authority to say "fuck you I'm not going to pay". And if you showed up with a plane that didn't meet some spec but was otherwise fantastic he could say "actually this is pretty great, let's go with this." Today, they largely lack that sort of authority, and everyone has to follow the specs even if they're producing lousy results.

@cassander

Which circles back around to contract size. If you have one huge contract, with two possible contractors, the balance of power swings away from the program manager and specs become stricter as a means to produce some kind of accountability. This of course suits the large contractor just fine.

The solution imho is in conscious retreat back to incremental improvements in the way John is describing, but that's made difficult with the siren song of the big, game changing project sucking up all the oxygen. I'm sure we could find a dozen examples of someone asking for a small, useful improvement being told no don't worry about that, it will be included in the Big Project (TM).